Implementing HR Analytics using Universal Adaptors


- Technical documentation of various aspects of the product as it applies to Oracle Business Intelligence Applications HR Universal Adaptors

Oracle Corporation |


1. IMPLEMENTING HR ANALYTICS UNIVERSAL ADAPTORS

2. GENERAL BACKGROUND OF UNIVERSAL ADAPTORS

3. GENERAL IMPLEMENTATION CONSIDERATIONS

4. KNOW YOUR STEPS TOWARDS A SUCCESSFUL HR ANALYTICS IMPLEMENTATION FOR UNIVERSAL ADAPTOR

5. IMPACT OF INCORRECT CONFIGURATIONS OF DOMAIN VALUES

6. IMPACT OF INCORRECT POPULATION OF CODE-NAME COLUMNS

7. BEST PRACTICES FOR EXTRACTING INCREMENTAL CHANGES

8. DETAILED UNDERSTANDING OF THE KEY HR ETL PROCESSES

8.1. Core Workforce Fact Process

8.1.1. ETL Flow

8.1.2. Workforce Fact Staging (W_WRKFC_EVT_FS)

8.1.3. Workforce Base Fact (W_WRKFC_EVT_F)

8.1.4. Workforce Age Fact (W_WRKFC_EVT_AGE_F)

8.1.5. Workforce Period of Work Fact (W_WRKFC_EVT_POW_F)

8.1.6. Workforce Merge Fact (W_WRKFC_EVT_MERGE_F)

8.1.7. Workforce Month Snapshot Fact (W_WRKFC_EVT_MONTH_F)

8.1.8. Workforce Aggregate Fact (W_WRKFC_BAL_A)

8.1.9. Workforce Aggregate Event Fact (W_WRKFC_EVT_A)

8.1.10. Handling Deletes

8.1.11. Propagating to derived facts

8.1.12. Date-tracked Deletes

8.1.13. Purges

8.1.14. Primary Extract

8.1.15. Identify Delete

8.1.16. Soft Delete

8.1.17. Date-Tracked Deletes - Worked Example

8.2. Recruitment Fact Process

8.2.1. ETL Flow

8.2.2. Job Requisition Event & Application Event Facts (W_JOB_RQSTN_EVENT_F & W_APPL_EVENT_F)

8.2.3. Job Requisition Accumulated Snapshot Fact (W_JOB_RQSTN_ACC_SNP_F)

8.2.4. Applicant Accumulated Snapshot Fact (W_APPL_ACC_SNP_F)

8.2.5. Recruitment Pipeline Event Fact (W_RCRTMNT_EVENT_F)

8.2.6. Recruitment Job Requisition Aggregate Fact (W_RCRTMNT_RQSTN_A)


8.2.7. Recruitment Applicant Aggregate Fact (W_RCRTMNT_APPL_A)

8.2.8. Recruitment Hire Aggregate Fact (W_RCRTMNT_HIRE_A)

8.3. Absence Fact Process

8.3.1. ETL Flow

8.3.2. Absence Event Fact (W_ABSENCE_EVENT_F)

8.4. Learning Fact Process

8.4.1. ETL Flow

8.4.2. Learning Enrollment Accumulated Snapshot Fact (W_LM_ENROLLMENT_ACC_SNP_F)

8.4.3. Learning Enrollment Event Fact (W_LM_ENROLLMENT_EVENT_F)

8.5. Payroll Fact Process

8.5.1. ETL Flow

8.5.2. Payroll Fact (W_PAYROLL_F)

8.5.3. Payroll Aggregate Fact (W_PAYROLL_A)

9. KNOWN ISSUES AND PATCHES


1. Implementing HR Analytics Universal Adaptors

The purpose of this document is to provide the information one might need when implementing HR Analytics using the Oracle BI Applications Universal Adaptors. There are several myths about what needs to be done when implementing Universal Adaptors. These include where things can go wrong if they are not configured correctly, which columns must be populated, and how to provide a delta data set for incremental ETL runs. All of these topics are discussed in this document.

Apart from understanding the entry points required to implement HR Analytics, it also helps to know the process details of some key components of HR Analytics. A few of these key facts and dimensions are also discussed, and an overview of their processes and usage is provided towards the end.

Knowing the list of files/tables to be populated (entry points), the grain of those tables and the kind of data they expect has always been a key pain point for implementers. This is also discussed in detail. An Excel spreadsheet (HR_Analytics_UA_Lineage.xlsx), provided along with this document, addresses all such needs.

This document is intended for Oracle BI Applications Releases 7.9.6, 7.9.6.1, 7.9.6.2 and 7.9.6.3. This document will be updated for upcoming releases in due course.


2. General Background of Universal Adaptors

Oracle BI Applications provides packaged ETL mappings against source OLTP systems such as Oracle E-Business Suite, PeopleSoft, JD Edwards and Siebel. These cover business areas such as Human Resources, Supply Chain & Procurement, Order Management, Financials and Service. However, Oracle BI Applications acknowledges that there can be quite a few other source systems, including home-grown ones typically used by SMB customers. Some enterprise customers may also be using SAP as their source. Until Oracle BI Applications can deliver pre-built ETL adaptors against each of these source systems, the Universal Adaptor is a viable choice.

A mixed OLTP landscape, where one system has a pre-built adaptor and another does not, also calls for the Universal Adaptors. For instance, the core portion of Human Resources may be in PeopleSoft, while the Talent Management portion is maintained in a 3rd party system like Taleo.

To let customers pull data from unsupported source systems into the Data Warehouse, Oracle BI Applications provides a so-called Universal Adaptor. This was possible in the first place because the ETL architecture of Oracle BI Applications already supported it.

The Oracle BI Applications Data Warehouse consists of a large set of fact, dimension and aggregate tables. The portion of the ETL that loads these end tables is typically Source Independent (loaded using the Informatica folder SILOS). These ETL maps start from a staging table and load data incrementally into the corresponding end table. Aggregates are created upstream and have no relation to which source system the data came from.

The Source Dependent Extract (SDE) portion of the ETL extracts into these staging tables, which are also called Universal Stage Tables. This is the part that goes against a given source system, such as EBS or PSFT. For Universal, the extracts go against a similarly structured CSV file. Whichever adaptor is used, the universal stage tables are structurally identical, and the grain expectation is the same for all adaptors. As long as these conditions are met, the SILOS part loads the data (extracted from Universal) from the universal stage tables seamlessly.

Why did Oracle BI Applications decide to source from CSV files? In short, to complete the end-to-end extract-transform-load story. The next section covers this in more detail, along with the available options.


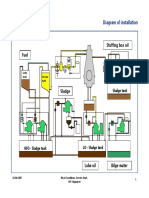

3. General Implementation Considerations

One myth among implementers of Universal Adaptors is that data for the universal staging tables must always be presented to Oracle BI Applications in the required CSV file format.

If your source data is already in a relational database, why dump it to CSV files and give it to Oracle BI Applications? You will have to write new ETL mappings anyway, reading from those relational tables to get to the right grain and the right columns. Why then target those mappings to CSV files, only to have the Oracle BI Applications Universal Adaptor read them and write to the universal staging tables? Why not target those custom ETL maps directly at the universal staging tables? In fact, when your source data is in relational tables, this is the preferred approach.

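[Figure: two routes from an OLTP (relational DB) source into the Data Warehouse. Route 1 is a custom extract to CSV files, followed by the Universal Adaptor loading the staging tables. Route 2 is a custom extract directly to the staging tables.]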

However, your source data may come from an outsourced 3rd party that sends you data files or reports periodically under an agreement. If that party does not allow you to access its relational schema, CSV files are probably the only alternative. Payroll data is a typical example: many organizations outsource their Payroll to 3rd party companies such as ADP. In those cases, ask for the data in the same format that the Oracle BI Applications CSV files expect.

Also, your source data may lie in IBM mainframe systems, where it is typically easier to write COBOL (or similar) programs that extract the data to flat files. In that case, presenting CSV files to the Oracle BI Applications Universal Adaptor is probably easier. However you populate the universal staging tables (from relational sources or CSV sources), always keep five very important points in mind:

The grain of the universal staging tables is met properly.

Records are unique on the (typically) INTEGRATION_ID columns.

The mandatory columns are populated the way they should be.


Relational constraints are met when populating data for facts. In other words, the natural keys that you provide in the fact universal staging table must exist in the dimensions. This is what the FK resolution (dimension keys into the end fact table) depends on.

The incremental extraction policy is well set up. Some overlap of data is acceptable, but populating the entire dataset into the universal staging tables will perform poorly.
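Some of the points above can be checked automatically before a file is handed to the Universal Adaptor. The following is a minimal Python sketch using only the standard library. It checks uniqueness of INTEGRATION_ID, that mandatory columns are populated, and that the natural keys supplied for FK resolution exist. Grain and incremental policy still need human review. The column names EMPLOYEE_ID and EVENT_DATE, the dimension name "employee" and its key set are hypothetical placeholders, not the actual universal stage table layout.

```python
import csv
import io

def check_stage_rows(rows, mandatory, fk_columns, dimension_keys):
    """Return a list of problems found in rows destined for a universal stage table.

    rows:           iterable of dicts, e.g. from csv.DictReader
    mandatory:      column names that must not be empty
    fk_columns:     {fact column: dimension name} for natural-key lookups
    dimension_keys: {dimension name: set of natural keys already loaded}
    """
    problems = []
    seen_ids = set()
    for row_no, row in enumerate(rows, start=1):
        # Uniqueness: each INTEGRATION_ID may appear only once in the file.
        integration_id = row.get("INTEGRATION_ID", "")
        if integration_id in seen_ids:
            problems.append(f"row {row_no}: duplicate INTEGRATION_ID {integration_id!r}")
        seen_ids.add(integration_id)
        # Mandatory columns: present and not blank.
        for col in mandatory:
            if not (row.get(col) or "").strip():
                problems.append(f"row {row_no}: mandatory column {col} is empty")
        # FK resolution: every natural key supplied must exist in its dimension.
        for col, dim in fk_columns.items():
            value = row.get(col)
            if value and value not in dimension_keys.get(dim, set()):
                problems.append(f"row {row_no}: {col}={value!r} not found in {dim}")
    return problems

# Hypothetical sample: a duplicate ID, a blank mandatory value and an unknown employee.
SAMPLE = """INTEGRATION_ID,EMPLOYEE_ID,EVENT_DATE
A1~E1,E1,2010-01-01
A1~E1,E1,2010-02-01
A2~E2,E9,
"""
rows = list(csv.DictReader(io.StringIO(SAMPLE)))
problems = check_stage_rows(rows, ["EVENT_DATE"], {"EMPLOYEE_ID": "employee"},
                            {"employee": {"E1", "E2"}})
for problem in problems:
    print(problem)
```

The sample reports three problems: the duplicate ID on row 2, plus the empty EVENT_DATE and the unknown EMPLOYEE_ID on row 3. In a real implementation, the dimension key sets would come from the dimension CSV files prepared earlier.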

Note: For the rest of the document, we assume that you are taking the CSV file approach. To reiterate, though, if your source data is stored in a relational database, you are recommended to write your own extract mappings.


4. Know your steps towards a successful HR Analytics implementation for Universal Adaptor

There are several entry points when implementing HR Analytics using Universal Adaptors. The base dimension tables and base fact tables each have a corresponding CSV file, in which you should configure the data at the right grain and to the right expectations. Other kinds of tables include Exchange Rate and Codes. Exchange Rate (W_EXCH_RATE_G) has its own single CSV file, whereas the Codes table (W_CODE_D) has one CSV file per code category. To see all code names properly in the dashboards/reports, you should configure all the required code CSV files [the list of such files is provided in the associated spreadsheet (HR_Analytics_UA_Lineage.xlsx)].

[Figure: HR Analytics entry points. Common HR Dimensions, Fact Specific HR Dimensions, HR Event Dimensions, Code Dimensions, Other Common Dimensions, Common Class Dimensions, Workforce Fact, Other Facts, and Domain CSV Files.]

Key points:

Start by populating the core HR dimension CSV files, such as Employee, Job, Pay Grade, HR Position and Employment.

Then configure the subject-area-specific HR dimensions, depending on what you are implementing:
- Recruitment: Job Requisitions, Recruitment Source, etc.
- Learning: Learning Grade, Course.
- Payroll: Pay Type, etc.
- Absence: Absence Event, Absence Type Reason.

Two important HR event dimensions should go next: Workforce Event Type (mandatory for all implementations) and Recruitment Event Type (if implementing Recruitment). These tables can decide the overall success of the implementation. The key aspects here are identifying events in your source system and stamping them with the domain values known to Oracle Business Intelligence Applications.

Then configure the related COMMON class dimensions that apply to all subject areas, such as Internal Organizations (logical/applicable partitions being Department, Company Organization, Job Requisition Organization, Business Unit, etc.) or Business Locations (logical/applicable partition being Employee Locations).

Consider other shared and helper dimensions, such as Status (logical/applicable partition being Recruitment Status), Exchange Rate, etc.

Then consider the code dimensions. By this time you already know which dimensions you are implementing, so you can save time by configuring the CSVs for the corresponding code categories only.

For facts, start by configuring the Workforce Event fact. The dimensions are already configured, so you already know their natural keys, and configuring them in the fact should be easy.

Follow the Workforce fact with the subject-area-specific HR facts, such as Payroll, Job Requisition Event, Applicant Event, Learning Enrollment, and Learning Enrollment Accumulated Snapshot. Note that the Absence fact does not need a CSV file. It is populated as a Post Load process from the Absence Event dimension, the Workforce Event fact and the time dimension.

With all the CSV files for facts, dimensions and helper tables populated, move your focus to the domain values.

For the E-Business Suite and PeopleSoft Adaptors, we do mid-stream lookups against preconfigured lookup CSV files. These files come with the mapping from source values/codes to their corresponding domain values/codes already configured. For the Universal Adaptor, however, no such lookup files exist. This is because we expect the accurate domain values/codes to be configured along with the base dimension tables where they apply. Since everything comes from a CSV file, there is no need for the overhead of an additional lookup file in the middle.

Domain value columns begin with W_ [excluding system columns such as W_INSERT_DT and W_UPDATE_DT]. They are normally mandatory, cannot be null, and their value set cannot be changed or extended. We do relax the extension rule on a case-by-case basis, but the values can in no way be changed.

The recommendation at this stage is as follows:
- Go to the DMR guide (Data Model Reference Guide) and get the list of table-wise domain values.
- Understand any hierarchical or orthogonal relationships between them.
- Identify the tables where they apply and the corresponding CSV files.
- Look at the source data and configure the domain values in those same CSV files.

Note that your source data may be in a relational database, and you may have chosen the alternate route of creating all extract mappings yourself. In that case, follow what we have done for the E-Business Suite and PeopleSoft Adaptors: create separate domain value lookup CSV files and do a mid-stream lookup.

Last but not least, configure the parameters in DAC (Data Warehouse Administration Console). Read the documentation for these parameters and understand their expectations. Then study your own business requirements and set the values accordingly.
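The domain value rules above can be checked against a prepared CSV: domain columns start with W_ except system columns, are mandatory, and take values only from a fixed set. The following is a minimal Python sketch. The exclusion set contains only the two system columns named in the text, so extend it for any others. The allowed value set for W_SEX_MF_CODE ({"M", "F"}) and the SEX_CODE source column are assumptions for illustration only. Take the real value sets from the DMR guide.

```python
# System columns that start with W_ but are not domain values. Only the two named
# in the text are listed; extend the set with any other W_ system columns.
SYSTEM_COLUMNS = {"W_INSERT_DT", "W_UPDATE_DT"}

def domain_value_columns(header):
    """Return the domain value columns of a CSV header (W_ prefix, minus system columns)."""
    return [col for col in header if col.startswith("W_") and col not in SYSTEM_COLUMNS]

def check_domain_values(header, rows, allowed_values):
    """Flag empty domain values and values outside the allowed (DMR guide) value set.

    allowed_values maps a domain column to its value set; columns without an
    entry are only checked for being populated.
    """
    problems = []
    columns = domain_value_columns(header)
    for row_no, row in enumerate(rows, start=1):
        for col in columns:
            value = (row.get(col) or "").strip()
            if not value:
                problems.append(f"row {row_no}: {col} is empty")
            elif col in allowed_values and value not in allowed_values[col]:
                problems.append(f"row {row_no}: {col}={value!r} is not a domain value")
    return problems

header = ["INTEGRATION_ID", "SEX_CODE", "W_SEX_MF_CODE", "W_INSERT_DT"]
rows = [
    {"INTEGRATION_ID": "E1", "SEX_CODE": "M", "W_SEX_MF_CODE": "M"},
    {"INTEGRATION_ID": "E2", "SEX_CODE": "male", "W_SEX_MF_CODE": "male"},  # source value copied as-is
    {"INTEGRATION_ID": "E3", "SEX_CODE": "F", "W_SEX_MF_CODE": ""},        # domain value not configured
]
problems = check_domain_values(header, rows, {"W_SEX_MF_CODE": {"M", "F"}})
print(problems)
```

W_INSERT_DT is skipped because it is a system column. The two problems reported are the unconfigured value on row 2 and the empty value on row 3.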


5. Impact of incorrect configurations of domain values

Domain values are a very important foundation of Oracle Business Intelligence Applications. We use this concept heavily, across the board, to equalize similar aspects from a variety of source systems. Oracle Business Intelligence Applications provides packaged data warehouse solutions for various source systems such as E-Business Suite, PeopleSoft, Siebel and JD Edwards. We provide a source dependent extract mapping, which leads to a source independent load mapping, followed by a post load mapping (also source independent). With data possibly coming from a variety of source systems, this equalization is necessary. Moreover, the reporting metadata (OBIEE RPD) is also source independent, and so, naturally, are the metric calculations.

The following diagram shows how a worker status code/value is mapped onto a warehouse domain to conform to a single target set of values. The domain is then re-used by any measures that are based on worker status.

[Figure: worker status mapped to a warehouse domain. OLTP 1 source values: Active → ACTIVE, Inactive → INACTIVE. OLTP 2 source values: Active → ACTIVE, Suspended → INACTIVE, Terminated → INACTIVE. Measures in the Data Warehouse are based on the conformed domain value (e.g. ACTIVE).]
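The worker status example above can be expressed as a per-source lookup onto one domain, with a measure defined once on the domain value. The following is a minimal Python sketch. The mapping table mirrors the example values; the function names are hypothetical. Raising an error on an unmapped value is a design choice made here so that configuration gaps surface early rather than silently skewing the measure.

```python
# Source value -> warehouse domain value, per source system, as in the worker status example.
STATUS_DOMAIN_MAP = {
    "OLTP 1": {"Active": "ACTIVE", "Inactive": "INACTIVE"},
    "OLTP 2": {"Active": "ACTIVE", "Suspended": "INACTIVE", "Terminated": "INACTIVE"},
}

def conform_status(source_system, source_value):
    """Map a source worker status onto the single warehouse domain."""
    try:
        return STATUS_DOMAIN_MAP[source_system][source_value]
    except KeyError:
        # Fail loudly: an unmapped value would otherwise skew every status-based measure.
        raise ValueError(f"no domain value for {source_value!r} from {source_system}") from None

def active_headcount(workers):
    """A measure defined once, on the domain value, regardless of source system."""
    return sum(1 for system, status in workers if conform_status(system, status) == "ACTIVE")

workers = [("OLTP 1", "Active"), ("OLTP 1", "Inactive"),
           ("OLTP 2", "Active"), ("OLTP 2", "Suspended"), ("OLTP 2", "Terminated")]
print(active_headcount(workers))  # 2
```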

Domain values help us to equalize similar aspects or attributes as they come from different source systems. We use these values in our ETL logic, sometimes even as hard-coded filters, and in defining our reporting layer metrics. Therefore, unpredictable results will follow if you leave these domain value columns unconfigured, configure them incorrectly, or change their values from what we expect. Even if you have a single source system to implement, you still have to go through all the steps and configure the domain values based on your source data. This is the small price you pay for taking the buy approach rather than the traditional build approach for your data warehouse.

One frequently asked question is: what is the difference between domain value code/name pairs and the regular code/name pairs stored in W_CODE_D?

If you look at the structure of the W_CODE_D table, it also appears capable of standardizing code/name pairs to something common. This is correct. However, we wanted to give users extensive freedom to standardize (not necessarily equalize) their code/name pairs, and possibly to use that for cleansing as well. For example, the source-supplied code/name pairs may be CA/CALIF or CA/California. You can then choose the W_CODE_D approach to standardize on CA/CALIFORNIA, using the Master Code and Master Map tables (see the configuration guide for details).

To explain the difference between domain value code/name pairs and regular code/name pairs, it is enough to understand the significance of the domain value concept. In short, we (Oracle Business Intelligence Applications) promoted a regular code/name pair to a domain value code/name pair wherever we felt we should equalize two similar topics. These are topics that give us analytic value, metric calculation possibilities and so on.

Suppose we need to provide a metric called Male Headcount. We cannot do that accurately unless we know which part of the headcount is Male and which is Female. The metric then has simple calculation logic: Sum of headcount where sex = Male. PeopleSoft may call it "M" and EBS may call it "male", so we made it a domain value code/name pair, W_SEX_MF_CODE (available in the employee dimension table). Needless to say, if you do not configure the domain value for this column accurately, you will not get this metric right.
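The calculation "Sum of headcount where sex = Male" only works on the conformed domain column, which shows why a misconfigured domain value silently changes the metric. The following is a minimal Python sketch. It assumes "M" is the male value of W_SEX_MF_CODE (confirm the actual value set in the DMR guide). HEADCOUNT, SOURCE and SEX are hypothetical column names.

```python
def male_headcount(employees):
    """Sum of headcount where the conformed sex domain code is male.

    Assumes 'M' is the male value of W_SEX_MF_CODE; confirm it in the DMR guide.
    HEADCOUNT is a hypothetical per-row measure column.
    """
    return sum(e["HEADCOUNT"] for e in employees if e["W_SEX_MF_CODE"] == "M")

employees = [
    {"SOURCE": "PeopleSoft", "SEX": "M", "W_SEX_MF_CODE": "M", "HEADCOUNT": 1},
    {"SOURCE": "EBS", "SEX": "male", "W_SEX_MF_CODE": "M", "HEADCOUNT": 1},     # correctly configured
    {"SOURCE": "EBS", "SEX": "male", "W_SEX_MF_CODE": "male", "HEADCOUNT": 1},  # source value left in place
]
print(male_headcount(employees))  # 2, not 3: the misconfigured row drops out of the metric
```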


6. Impact of incorrect population of CODE-NAME columns

The out-of-the-box Oracle BI Applications dashboards and reports mostly use Name and Description columns. We use Codes only during calculations, where required. Therefore, if the names and descriptions do not resolve against their codes during the ETL, you will see blank attribute values. In some cases, depending on the DAC parameter setting, you will instead see strings such as <Source_Code_Not_Supplied> or <Source_Name_Not_Supplied>.

Another point to keep in mind is that all codes should have distinct name values. If two or more codes have the same name value, they appear merged at the report level. The metric values may still appear on different lines of the report, because the OBIEE Server typically adds a GROUP BY clause on the lowest attribute (code).
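Duplicate names can be caught before loading by grouping codes by name within each code category. The following is a minimal Python sketch. It assumes the code CSV rows have been read into (category, code, name) tuples; the category names EMPLOYEE_CAT and PAY_TYPE are hypothetical. Names are compared exactly, so variants that differ only in case or spacing are not flagged.

```python
from collections import defaultdict

def shared_names(code_rows):
    """Find names used by more than one code within the same code category.

    code_rows: iterable of (category, code, name) tuples, e.g. read from the code CSV files.
    Returns {(category, name): sorted list of codes sharing that name}.
    """
    codes_by_name = defaultdict(set)
    for category, code, name in code_rows:
        codes_by_name[(category, name)].add(code)
    return {key: sorted(codes) for key, codes in codes_by_name.items() if len(codes) > 1}

code_rows = [
    ("EMPLOYEE_CAT", "FT", "Full Time"),
    ("EMPLOYEE_CAT", "FTR", "Full Time"),  # same name as FT: would appear merged on reports
    ("EMPLOYEE_CAT", "PT", "Part Time"),
    ("PAY_TYPE", "FT", "Full Time"),       # different category, so no clash
]
print(shared_names(code_rows))
```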

Once implemented, you are free to promote the code columns from the logical layer to the presentation layer. You might do this if your business users are more familiar with the code values than the name values. That is a separate business decision, however, and not the default behavior.


7. Best practices for extracting incremental changes

Although you can choose to supply the entire dataset during incremental runs, this is not recommended, for two practical reasons. First, the ETL then has to process all the records and determine what needs to be applied and what can be rejected. Second, the decisions the ETL takes may not be accurate.

ETL decisions are based only on the values of the system date columns: CHANGED_ON_DT, AUX1_CHANGED_ON_DT, AUX2_CHANGED_ON_DT, AUX3_CHANGED_ON_DT and AUX4_CHANGED_ON_DT. We do not compare column by column to determine whether an update is required. We assume that if something has changed, at least one of these five date columns has changed, and in that case we simply update. If all five date columns are the same, we generally reject the record.

This decision therefore depends on the correctness of the date columns. If your source system does not track the last updated date on a record well enough, it becomes your responsibility to force an update regardless. An easy way to do this is to set SESSSTARTTIME in one of these columns during the extract. This forces the ETL to detect a change, so the record ends up being updated.
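The date-based change decision, and the effect of stamping a session start time, can be illustrated as follows. This is a minimal Python sketch of the rule as described, not the actual Informatica logic. Which date column receives the stamp is your choice; AUX4_CHANGED_ON_DT is used here arbitrarily. The SALARY column and the function names are hypothetical.

```python
from datetime import datetime

# The five system date columns the incremental load compares; no other columns are compared.
DATE_COLUMNS = (
    "CHANGED_ON_DT",
    "AUX1_CHANGED_ON_DT",
    "AUX2_CHANGED_ON_DT",
    "AUX3_CHANGED_ON_DT",
    "AUX4_CHANGED_ON_DT",
)

def is_changed(incoming, existing):
    """True if any system date differs between the incoming and the existing record."""
    return any(incoming.get(col) != existing.get(col) for col in DATE_COLUMNS)

def stamp_session_start(row, session_start, column="AUX4_CHANGED_ON_DT"):
    """Copy of row with session_start in one date column, mimicking SESSSTARTTIME."""
    stamped = dict(row)
    stamped[column] = session_start
    return stamped

existing = {"INTEGRATION_ID": "A1", "SALARY": 100, "CHANGED_ON_DT": datetime(2011, 1, 1)}
incoming = dict(existing, SALARY=120)   # a real change, but the dates did not move
print(is_changed(incoming, existing))   # False: this update would be rejected
forced = stamp_session_start(incoming, datetime(2011, 6, 1))
print(is_changed(forced, existing))     # True: the record is now updated
```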

Needless to say, this is not the best idea. Wherever possible, provide the true delta data set during every incremental run. A small amount of overlap is acceptable, especially when you deal with flat files.

For facts or large dimensions, our generally accepted rules are either of the following:

The customer implements their own version of persisted staging. This lets them determine changes at the earliest opportunity and load only the changes into the universal staging tables.

If it is absolutely impossible to determine the delta or to go the persisted staging route, the customer does only full loads. Otherwise, doing a full extract every time and processing it incrementally will take longer.

Follow the principles below to decide on your incremental strategy:

(Applies to relational table sources) Does your source system accurately capture the last update date/time on the records that change? If so, extracting based on this column is the best approach.

Your extract mapping may use 2 or 3 different source tables. Decide which one is primary and which ones are secondary. The last update date of the primary table goes to the CHANGED_ON_DT column in the stage table. The last update dates of the other tables each go to one of the auxiliary changed-on date columns in the stage table.

If you design your extract mapping this way, you are almost done. Just make sure you add the filter criterion primary_table.last_update_date >= $$LAST_EXTRACT_DATE. The value of this parameter is maintained in DAC.

(Applies to CSV file sources) Assume you have a trustworthy mechanism that gives you the delta file for each incremental load. Does the delta file come with changed values in the system date columns? If yes, you are OK. If not, add an extra piece of logic to the out-of-the-box SDE_Universal** mappings that sets SESSSTARTTIME in one of the system date columns. This forces an update (when possible) regardless.


(Applies to CSV file sources) If there is no easy mechanism to provide a delta file for incremental runs, and it seems easier to get a complete dump every time, you actually have a couple of choices:

a. Pass the whole file in every load, but run true incremental loads. Note that this is not an option for large dimensions or facts.

b. Pass the whole file each time and always run a full load.

c. Do some back-office processing of the files to produce the delta file yourself.

Choices (a) and (b) may sound like bad ideas, but we have seen them to be worthwhile compared to (c) when the source data volume is not very high. For an HR Analytics implementation, this could be OK as long as your employee strength is no more than 5000 and you have no more than 5 years of data.

Choice (c) is more involved but produces the best results. The idea is simple:
- Store the last image of the full file that your source gave you (call it A).
- When you get your new full file today (call it B), compare A and B. There is quite a lot of data-diff software on the market, or, better, you could write your own Perl or Python script.
- The output should be a new delta file (call it C) containing the lines from B that have changed compared to A. Use C as the delta data for your incremental run.
- Finally, discard A and rename B as A, ready for the next batch.

Having said that, it is worth reiterating that Persisted Staging is a better approach, as it is simpler and uses Informatica to do the comparison. For reference, Oracle BI Applications uses this technique in the HR adaptors for E-Business Suite and PeopleSoft.

This list is indicative rather than comprehensive. If there are other options not considered here, by all means try them out.
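Choice (c) can be sketched in a few lines of Python using only the standard library. This sketch assumes both full files are plain text with one record per line and a single header line. A line counts as changed if its text differs in any way. Rows that disappeared from B (deletes) are not reported and would need separate handling. The function names are hypothetical, and Persisted Staging remains the approach the text prefers.

```python
import os

def delta_lines(previous, current, has_header=True):
    """Lines of the new full file (B) that are new or changed compared with the old one (A).

    Whole lines are compared, so a change in any column puts the line in the delta.
    Lines missing from B (deleted records) are not reported.
    """
    header = current[:1] if has_header else []
    old_body = set(previous[1:] if has_header else previous)
    new_body = current[1:] if has_header else current
    return header + [line for line in new_body if line not in old_body]

def build_delta_file(path_a, path_b, path_c):
    """Write delta file C from full files A and B, then make B the new A for the next batch."""
    with open(path_a, encoding="utf-8") as f:
        previous = f.read().splitlines()
    with open(path_b, encoding="utf-8") as f:
        current = f.read().splitlines()
    delta = delta_lines(previous, current)
    with open(path_c, "w", encoding="utf-8") as f:
        f.write("\n".join(delta) + "\n")
    os.replace(path_b, path_a)  # discard A and rename B as A
    return delta

previous = ["ID,NAME,SAL", "1,A,100", "2,B,200"]
current = ["ID,NAME,SAL", "1,A,100", "2,B,250", "3,C,300"]
print(delta_lines(previous, current))  # header, the changed row 2 and the new row 3
```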


8. Detailed understanding of the key HR ETL Processes

8.1. Core Workforce Fact Process

8.1.1. ETL Flow

[Figure: Core Workforce ETL flow.
- The Workforce Event Fact CSV file input loads the Workforce Fact Staging table (W_WRKFC_EVT_FS), which loads the Workforce Fact (W_WRKFC_EVT_F).
- From the Workforce Fact, the Workforce Age Fact (W_WRKFC_EVT_AGE_F, with the Age Band Dimension W_AGE_BAND_D) and the Workforce Service Fact (W_WRKFC_EVT_POW_F, with the Period of Work Band Dimension W_PRD_OF_WRK_BAND_D) are built.
- These facts feed the Workforce Merge Fact (W_WRKFC_EVT_MERGE_F).
- The Merge Fact feeds the Workforce Month Snapshot Fact (W_WRKFC_EVT_MONTH_F, with the Month Dimension W_MONTH_D) and the Workforce Event Aggregate (W_WRKFC_EVT_A, with the Dimension Aggregate W_WRKFC_EVENT_GROUP_D).
- The Month Snapshot Fact feeds the Workforce Balance Aggregate (W_WRKFC_BAL_A, with the Dimension Aggregate W_EMPLOYMENT_STAT_CAT_D).]
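The dependencies in the Workforce flow form a directed graph, so a valid load order is a topological sort of it. The following is a minimal Python sketch using the standard library's graphlib (Python 3.9+). It is a simplified reading of the flow and of the primary sources listed in the table below, in which the *_EQ_TMP helper tables are omitted. "WORKFORCE_EVENT_CSV" is a hypothetical label for the CSV input. In practice, DAC orders the actual ETL tasks; this only illustrates which tables must be loaded before which.

```python
from graphlib import TopologicalSorter  # Python 3.9+

# Target table -> tables it is loaded from. "WORKFORCE_EVENT_CSV" is a hypothetical
# label for the Workforce Event Fact CSV file; the *_EQ_TMP helper tables are omitted.
WORKFORCE_DEPENDENCIES = {
    "W_WRKFC_EVT_FS": ["WORKFORCE_EVENT_CSV"],
    "W_WRKFC_EVT_F": ["W_WRKFC_EVT_FS"],
    "W_WRKFC_EVT_AGE_F": ["W_WRKFC_EVT_F", "W_AGE_BAND_D"],
    "W_WRKFC_EVT_POW_F": ["W_WRKFC_EVT_F", "W_PRD_OF_WRK_BAND_D"],
    "W_WRKFC_EVT_MERGE_F": ["W_WRKFC_EVT_F", "W_WRKFC_EVT_AGE_F", "W_WRKFC_EVT_POW_F"],
    "W_WRKFC_EVT_MONTH_F": ["W_WRKFC_EVT_MERGE_F", "W_MONTH_D"],
    "W_EMPLOYMENT_STAT_CAT_D": ["W_EMPLOYMENT_D"],
    "W_WRKFC_EVENT_GROUP_D": ["W_WRKFC_EVENT_TYPE_D"],
    "W_WRKFC_BAL_A": ["W_EMPLOYMENT_STAT_CAT_D", "W_WRKFC_EVT_MONTH_F"],
    "W_WRKFC_EVT_A": ["W_EMPLOYMENT_STAT_CAT_D", "W_WRKFC_EVENT_GROUP_D", "W_WRKFC_EVT_MERGE_F"],
}

def load_order(dependencies):
    """Return all tables ordered so that every source comes before the tables it feeds."""
    return list(TopologicalSorter(dependencies).static_order())

for table in load_order(WORKFORCE_DEPENDENCIES):
    print(table)
```

Several orders are valid. For example, the age and service band facts can be built in either order, because neither depends on the other.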


Terminology

Assignment refers to an instance of a person in a job. Its key should not be updatable in the source transaction system.

Key Steps and Table Descriptions

Table

Primary Sources

Grain

Description

W_WRKFC_EVT_FS

Flat file

Source adaptors

W_WRKFC_EVT_F

One row per assignment

per workforce event

W_WRKFC_EVT_AGE_F

W_AGE_BAND_D

W_WRKFC_EVT_F

One row per assignment

per age band change

W_WRKFC_EVT_POW_F

W_PRD_OF_WRK_BAND_D

W_WRKFC_EVT_F

One row per assignment

per service band change

W_WRKFC_EVT_MERGE_F

W_WRKFC_EVT_F

W_WRKFC_EVT_AGE_F

W_WRKFC_EVT_POW_F

W_WRKFC_EVT_MERGE_F

W_MONTH_D

One row per assignment

per workforce or band

change event

One row per assignment

per change event or

snapshot month

W_WRKFC_EVT_EQ_TMP

W_WRKFC_EVT_FS

One row per changed

assignment

W_WRKFC_EVT_MONTH_EQ_TMP

W_WRKFC_EVT_EQ_TMP

W_WRKFC_EVT_F

W_MONTH_D

One row per changed

assignment

W_WRKFC_BAL_A_EQ_TMP

W_WRKFC_EVT_MONTH_EQ_TMP

W_WRKFC_EVT_MONTH_F

W_EMPLOYMENT_STAT_CAT_D

One row per changed

employment

status/category and

snapshot month

W_WRKFC_EVT_A_EQ_TMP

W_WRKFC_EVT_EQ_TMP

W_WRKFC_EVT_MERGE_F

W_EMPLOYMENT_STAT_CAT_D

W_WRKFC_EVENT_GROUP_D

One row per changed

event group/sub group

and employment

status/category

W_EMPLOYMENT_STAT_CAT

W_EMPLOYMENT_D

One row per

Records workforce events

for assignments from

hire/start through to

termination/end. Includes

appraisals, salary reviews

and general changes.

Records age band change

events for each

assignment

Records service band

change events for each

assignment

Merges band change

events with workforce

events for assignments

Adds in monthly snapshot

records along with the

workforce and band

change events.

Reference table for which

assignments have changed

and the earliest change

dates

Expands

W_WRKFC_EVT_EQ_TMP

to include assignments

needing new snapshots

Reference table for which

employment

status/category have

changed and the snapshot

month

Reference table for which

event group/sub groups

have changed and changed

employment

status/category.

This table is an aggregated

W_WRKFC_EVT_F

W_WRKFC_EVT_MONTH_F

Oracle Corporation |

16

Implementing HR Analytics using Universal Adaptors

_D

Employment Status and

category.

W_WRKFC_EVENT_TYPE_D

W_WRKFC_EVENT_TYPE_DS

The grain of this table is

at a single Workforce

Event Type level.

W_WRKFC_EVENT_GROUP_

D

W_WRKFC_EVENT_TYPE_D

One row per Event

group and Event Sub

group

W_WRKFC_BAL_A

W_EMPLOYMENT_STAT_CAT_D

W_WRKFC_EVT_MONTH_F

One row per

employment

status/category and

snapshot month

W_WRKFC_EVT_A

W_EMPLOYMENT_STAT_CAT_D

W_WRKFC_EVENT_GROUP_D

W_WRKFC_EVT_MERGE_F

One row per workforce

event group/event sub

group and employment

status/category

dimension table on the

distinct Employment

Status and Category

available in

W_EMPLOYMENT_D table.

This dimension table

stores information about a

workforce event, such as

the action, whether the

organization or job has

changed, whether it is a

promotion or a transfer,

and so on. This table is

designed to be a Type-1

dimension.

This table is an aggregate

dimension based on the

Event Group and Event

Sub Group in the

W_WRKFC_EVENT_TYPE_

D dimension table.

This is a Balance Aggregate

table based on the

Snapshot Fact table

W_WRKFC_EVT_MONTH_

F.

This is an Events Aggregate

table based on the Base

Event Fact table

W_WRKFC_EVT_MERGE_F

.

Key Setup/Configuration Steps

The following table lists the minimum setup required for the target snapshot fact to load successfully. Other functionality requires additional setup, as documented in the installation guide. If that setup is not done up front, it may be necessary to re-run the initial load after completing it.


Type | Name | Description
--- | --- | ---
DAC System Parameter | INITIAL_EXTRACT_DATE | Earliest date to extract data across all facts
DAC System Parameter | HR_WRKFC_EXTRACT_DATE | Earliest date to extract data for HR facts
DAC System Parameter | HR_WRKFC_SNAPSHOT_DT | Earliest date to generate snapshots for HR Workforce. This should be set to the 1st of a month.
DAC System Parameter | HR_WRKFC_SNAPSHOT_TO_WID | Current date in WID form; should not be changed
Domains | Age Band | Age bands must be defined as a continuous set of ranges
Domains | Period of Work Bands | Period of work bands must be defined as a continuous set of ranges

Notes

1) The workforce extract date (HR_WRKFC_EXTRACT_DATE) should be the earliest date from which HR data is required for reporting, across all HR facts (e.g. Absences, Payroll, Recruitment). It can be later than the initial extract date if other, non-HR content needs an earlier initial extract date.

2) Generate snapshots for recent years only (via HR_WRKFC_SNAPSHOT_DT) to improve ETL performance and reduce the size of the snapshot fact.
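The constraints in the table and notes above lend themselves to a quick pre-load check. The sketch below is a hypothetical helper, not part of the product. It assumes the DAC parameter values have already been read into Python dates. It also assumes that band domains are expressed as (from_years, to_years) pairs, with an exclusive upper bound and None for an open-ended last band. The rule that HR_WRKFC_EXTRACT_DATE should not precede INITIAL_EXTRACT_DATE is an assumption drawn from note 1.

```python
from datetime import date
from typing import Optional


def check_parameters(initial_extract: date, hr_extract: date, snapshot_dt: date) -> list[str]:
    """Return problems found in the HR date parameters (an empty list means none)."""
    problems = []
    # Assumed from note 1: the HR extract date may be later than INITIAL_EXTRACT_DATE, not earlier.
    if hr_extract < initial_extract:
        problems.append("HR_WRKFC_EXTRACT_DATE is earlier than INITIAL_EXTRACT_DATE")
    # HR_WRKFC_SNAPSHOT_DT should be the 1st of a month.
    if snapshot_dt.day != 1:
        problems.append("HR_WRKFC_SNAPSHOT_DT is not the 1st of a month")
    return problems


def check_bands(bands: list[tuple[int, Optional[int]]]) -> list[str]:
    """Check that (from_years, to_years) bands form one continuous set of ranges.

    to_years is an exclusive upper bound; None marks an open-ended last band (e.g. 55+).
    """
    problems = []
    ordered = sorted(bands, key=lambda b: b[0])
    for (lo1, hi1), (lo2, _) in zip(ordered, ordered[1:]):
        if hi1 is None:
            problems.append(f"open-ended band starting at {lo1} is not the last band")
        elif hi1 < lo2:
            problems.append(f"gap between {hi1} and {lo2}")
        elif hi1 > lo2:
            problems.append(f"overlap between bands starting at {lo1} and {lo2}")
    return problems
```

For example, bands of 0-20, 20-30, 30-55 and 55+ pass the check. A band of 0-20 followed by 25+ is reported as a gap.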

8.1.2. Workforce Fact Staging (W_WRKFC_EVT_FS)

When loading the workforce fact staging table from a flat file, consider the following guidance on which rows and columns to populate.

Workforce Fact Incremental Load

No unnecessary changes should be staged

If there have been no change events for an assignment (an instance of a worker in a job), the fact staging table should not contain any rows for that assignment. The staging table is used as a reference for what data has actually changed and needs to be refreshed in the downstream facts. Staging unnecessary changes therefore slows down the incremental refresh.


Staging back-dated changes

A back-dated change is a change to historical (i.e. not current) records. This includes corrections to the date of birth or the period of work start date, which require the corresponding band change events to be recalculated.

When processing back-dated changes, one change is quite likely to affect many later records. For example, a correction to the last appraisal rating (or salary) affects every event since the last appraisal (or salary review). Changes to dimensions may affect the next record's change indicators or previous dimension key values. They may also need to be carried over to other types of events sourced from elsewhere.

To process back-dated changes correctly, the workforce fact staging table must be populated with the changed records and all subsequent events for the changed assignment. This can be done in more than one way, for example:

- Always extract changes and subsequent events from the source transaction system.
- Keep a record in the staging area of each change event type that has previously been processed in the warehouse (this method is implemented in the Oracle and PeopleSoft adaptors).
- Extract only the changes into the workforce fact staging table, and then use the data already loaded in the workforce fact to fill in the subsequent change events.
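The third option can be sketched in Python. This is an illustration under stated assumptions, not the adaptor's implementation. Fact and staging rows are modelled as dictionaries, and the hypothetical assignment_id and event_date fields stand in for the real W_WRKFC_EVT_F and W_WRKFC_EVT_FS columns. In practice, the same selection would be written in SQL or in the ETL tool. For each changed assignment, every already-loaded event after its earliest staged change is re-staged, unless it is already staged.

```python
from datetime import date


def fill_subsequent_events(staged: list[dict], fact: list[dict]) -> list[dict]:
    """Return the staged changes plus already-loaded later events for the same assignments."""
    # Earliest staged change date per assignment.
    earliest: dict[int, date] = {}
    for row in staged:
        a, d = row["assignment_id"], row["event_date"]
        earliest[a] = min(d, earliest.get(a, d))
    staged_keys = {(r["assignment_id"], r["event_date"]) for r in staged}
    result = list(staged)
    for row in fact:
        a, d = row["assignment_id"], row["event_date"]
        # Re-stage later events of changed assignments that are not already staged.
        if a in earliest and d > earliest[a] and (a, d) not in staged_keys:
            result.append(dict(row))
    return sorted(result, key=lambda r: (r["assignment_id"], r["event_date"]))
```

Two caveats apply to this option. First, corrected attribute values (for example, a back-dated salary) still have to be carried forward onto the re-staged rows, and the sketch does not do this. Second, an event deleted on the source would be re-staged from the fact, so deletes need separate handling.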

Workforce Fact Essential Columns

Certain columns are essential for the ETL to work and must be loaded into the fact staging table (W_WRKFC_EVT_FS) as a minimum. However, to get a useful system after running the ETL, some measures and dimension keys must be populated as well. All of these details are provided in the associated Excel spreadsheet (HR_Analytics_UA_Lineage.xlsx).


8.1.3. Workforce Base Fact (W_WRKFC_EVT_F)

[Figure: Workforce base fact load. W_WRKFC_EVT_FS loads W_WRKFC_EVT_F, which calculates EFFECTIVE_END_DATE based on the next event date. Incremental only: W_WRKFC_EVT_EQ_TMP is loaded, obsolete fact records are deleted, and effective start/end dates are maintained.]

The workforce base fact is refreshed from the workforce fact staging table:

- The effective end date is calculated from the next event date.
- Deleted events are removed. These are subsequent events that were previously loaded but are no longer staged (see "Staging back-dated changes" under Workforce Fact Incremental Load).
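A minimal sketch of the end-dating rule follows. It assumes, consistent with the worked example in 8.1.17, that each row ends the day before the next event for the same assignment, and that the latest row is open-ended with the high date 01-Jan-3714. The field names are hypothetical.

```python
from datetime import date, timedelta
from itertools import groupby

HIGH_DATE = date(3714, 1, 1)  # open-ended end date, as used in the worked example


def set_effective_end_dates(events: list[dict]) -> list[dict]:
    """Return copies of the events with effective_end_date derived from the next event date."""
    out = []
    ordered = sorted(events, key=lambda e: (e["assignment_id"], e["effective_start_date"]))
    for _, group in groupby(ordered, key=lambda e: e["assignment_id"]):
        rows = list(group)
        # Pair each row with its successor; the last row of an assignment has none.
        for cur, nxt in zip(rows, rows[1:] + [None]):
            end = HIGH_DATE if nxt is None else nxt["effective_start_date"] - timedelta(days=1)
            out.append({**cur, "effective_end_date": end})
    return out
```

Because the end date is always derived from the next start date, the result is a continuous, non-overlapping date-track by construction.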

Initial Load Sessions

- SIL_WorkforceEventFact (loads new/updated records)

Incremental Load Sessions

- SIL_WorkforceEventFact (loads new/updated records)
- PLP_WorkforceEventQueue_Asg (loads the change queue table with the changed (staged) assignments and their earliest change (staged event) date)
- PLP_WorkforceEventFact_Mntn (deletes obsolete events; see the note above)
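The two incremental steps around the base fact load can be illustrated as follows. The first builds the change queue (compare PLP_WorkforceEventQueue_Asg), and the second finds obsolete events (compare PLP_WorkforceEventFact_Mntn). This is a hypothetical sketch. For simplicity it identifies an event by (assignment_id, event_date), whereas the real fact uses its own integration keys.

```python
from datetime import date


def build_event_queue(staged: list[dict]) -> dict[int, date]:
    """Changed assignments and their earliest staged event date (cf. W_WRKFC_EVT_EQ_TMP)."""
    queue: dict[int, date] = {}
    for row in staged:
        a, d = row["assignment_id"], row["event_date"]
        queue[a] = min(d, queue.get(a, d))
    return queue


def obsolete_events(fact: list[dict], staged: list[dict], queue: dict[int, date]) -> list[dict]:
    """Loaded events on or after an assignment's earliest change that were not staged again."""
    staged_keys = {(r["assignment_id"], r["event_date"]) for r in staged}
    return [
        r for r in fact
        if r["assignment_id"] in queue
        and r["event_date"] >= queue[r["assignment_id"]]
        and (r["assignment_id"], r["event_date"]) not in staged_keys
    ]
```

Consider the data from the worked example in 8.1.17, where the transfer is deleted on the source. Staging the 01-Jan-2001 review and the 01-Jan-2003 review queues assignment 1 from 01-Jan-2001 and flags the 01-Jan-2002 transfer as obsolete.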

The date-track (a continuous, non-overlapping set of effective start/end dates per assignment) is critical to the downstream facts and to the correct operation of the reports.

Deletes can also be handled separately (see Handling Deletes below). However, care must be taken to keep the date-track correctly maintained when deleting individual records.


8.1.4. Workforce Age Fact (W_WRKFC_EVT_AGE_F)

[Figure: W_WRKFC_EVT_F and W_AGE_BAND_D load W_WRKFC_EVT_AGE_F.]

The age fact contains one starting row plus one row each time an assignment moves from one age band to the next. For example, if the last age band is 55+ years, an event is generated for each assignment on the worker's 55th birthday (BIRTH_DT + 55 years). A worker hired beyond the age of 55 has no additional band change events, just the starting row.

Note that the age bands are fully configurable. However, because of the dependencies between the age bands and the facts, any change to the configuration requires a reload (initial load).

This fact is refreshed for an assignment whenever the worker's date of birth changes on the hire record (or on the first record, if the hire occurred before the fact initial extract date).
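The band change rule can be sketched as shown below. The following details are assumptions for illustration: band lower bounds in whole years, the (date, band) output shape, and the handling of 29 February birthdays. The document itself only specifies that a band is entered on BIRTH_DT + N years, and that every assignment gets one starting row.

```python
from datetime import date


def add_years(d: date, years: int) -> date:
    """Anniversary of d; 29 Feb falls back to 28 Feb in non-leap years (an assumption)."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


def age_band_events(birth_dt: date, start_dt: date, band_starts: list[int]) -> list[tuple[date, int]]:
    """One starting row plus one row per age band entered after start_dt, as (date, band lower bound)."""
    starts = sorted(band_starts)
    # Band on the start date: the highest lower bound already reached.
    # Anyone younger than the lowest bound is treated as being in the lowest band.
    current = max((b for b in starts if add_years(birth_dt, b) <= start_dt), default=starts[0])
    events = [(start_dt, current)]
    # Each later band is entered on the corresponding birthday (BIRTH_DT + N years).
    events += [(add_years(birth_dt, b), b) for b in starts if b > current]
    return events
```

Consider lower bounds of 0, 20, 30, 40 and 55. A worker born on 15-Mar-1960 and hired on 01-Jul-1990 gets a starting row in the 30 band, plus events on 15-Mar-2000 and 15-Mar-2015. A worker hired at 60 gets only the starting row.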

Initial Load Sessions

- PLP_WorkforceEventFact_Age_Full (loads new records)

Incremental Load Sessions

- PLP_WorkforceEventFact_Age_Mntn (deletes records to be refreshed or obsolete)
- PLP_WorkforceEventFact_Age (loads changed records)


8.1.5. Workforce Period of Work Fact (W_WRKFC_EVT_POW_F)

[Figure: W_WRKFC_EVT_F and W_PRD_OF_WRK_BAND_D load W_WRKFC_EVT_POW_F.]

The period of work fact contains one starting row plus one row each time an assignment moves from one service band to the next. For example, if the first service band is 0-1 years, an event is generated for each assignment exactly one year after hire (POW_START_DT).

Note that the period of work bands are fully configurable. However, because of the dependencies between the service bands and the facts, any change to the configuration requires a reload (initial load).

This fact is refreshed whenever the hire record changes (or the first record, if the hire was before the fact initial extract date).
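When reconciling W_WRKFC_EVT_POW_F, it can help to compute which service band an assignment should be in on a given date, for example after a hire date correction. The sketch below does this with a binary search over hypothetical band lower bounds in whole years. With a first band of 0-1 years, the band changes exactly on the first anniversary of POW_START_DT, as in the example above.

```python
from bisect import bisect_right
from datetime import date


def years_of_service(pow_start_dt: date, as_of: date) -> int:
    """Completed years between POW_START_DT and as_of."""
    years = as_of.year - pow_start_dt.year
    if (as_of.month, as_of.day) < (pow_start_dt.month, pow_start_dt.day):
        years -= 1  # anniversary not yet reached this year
    return years


def service_band(pow_start_dt: date, as_of: date, band_starts: list[int]) -> int:
    """Lower bound (in years) of the service band in force on as_of."""
    starts = sorted(band_starts)
    # Index of the last lower bound <= completed years of service.
    i = bisect_right(starts, years_of_service(pow_start_dt, as_of)) - 1
    return starts[max(i, 0)]
```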

Initial Load Sessions

- PLP_WorkforceEventFact_Pow_Full (loads new records)

Incremental Load Sessions

- PLP_WorkforceEventFact_Pow_Mntn (deletes records to be refreshed)
- PLP_WorkforceEventFact_Pow (loads changed records)


8.1.6. Workforce Merge Fact (W_WRKFC_EVT_MERGE_F)

[Figure: W_WRKFC_EVT_F, W_WRKFC_EVT_AGE_F and W_WRKFC_EVT_POW_F load W_WRKFC_EVT_MERGE_F.]

This fact contains the change events from the base, age and service facts. It is refreshed based on the combination of assignments and (earliest) event dates in the fact staging table.
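A rough sketch of the merge for the queued assignments follows. The following details are assumptions for illustration: the event_source tag, the tie-break order for events on the same date, and the dictionary row shape. A real merge would also carry the attributes of the preceding workforce event onto each band change row. That step is omitted here.

```python
from datetime import date

# Tie-break for events on the same date (an assumption).
SOURCE_ORDER = {"WORKFORCE": 0, "AGE_BAND": 1, "POW_BAND": 2}


def merge_events(base: list[dict], age: list[dict], pow_: list[dict],
                 queue: dict[int, date]) -> list[dict]:
    """Rows to (re)load into the merge fact for queued assignments, in date order."""
    merged = []
    for source, rows in (("WORKFORCE", base), ("AGE_BAND", age), ("POW_BAND", pow_)):
        for r in rows:
            a = r["assignment_id"]
            # Only queued assignments, from their earliest change date onwards.
            if a in queue and r["event_date"] >= queue[a]:
                merged.append({**r, "event_source": source})
    merged.sort(key=lambda r: (r["assignment_id"], r["event_date"], SOURCE_ORDER[r["event_source"]]))
    return merged
```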

Initial Load Sessions

- PLP_WorkforceEventFact_Merge_Full (loads new records)

Incremental Load Sessions

- PLP_WorkforceEventFact_Merge_Mntn (deletes records to be refreshed)
- PLP_WorkforceEventFact_Merge (loads changed records)


8.1.7. Workforce Month Snapshot Fact (W_WRKFC_EVT_MONTH_F)

[Figure: Workforce events (W_WRKFC_EVT_MERGE_F) and monthly snapshots (W_MONTH_D) load W_WRKFC_EVT_MONTH_F.]

This fact contains the merged change events, plus a generated snapshot record on the first of every month on or after the HR_WRKFC_SNAPSHOT_DT parameter. To allow future-dated reporting, snapshots are created up to 6 months in advance.

This fact is refreshed based on:

- The combination of assignments and (earliest) event dates in the fact staging table
- Any snapshots required for active assignments since the last load (e.g. if the incremental load has not run for a while, or the system date has moved into a new month since the last load)
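The snapshot dates can be sketched as follows. The sketch assumes that "up to 6 months in advance" means up to the 1st of the month six months after the current month. It does not cover the exact cut-off used by the product, nor the filtering of snapshots to months in which each assignment is active.

```python
from datetime import date


def add_months(d: date, n: int) -> date:
    """First day of the month n months after d's month."""
    m = d.month - 1 + n
    return date(d.year + m // 12, m % 12 + 1, 1)


def snapshot_dates(snapshot_dt: date, today: date, months_ahead: int = 6) -> list[date]:
    """1st of every month from HR_WRKFC_SNAPSHOT_DT up to months_ahead months after today's month."""
    d = date(snapshot_dt.year, snapshot_dt.month, 1)
    last = add_months(today, months_ahead)
    out = []
    while d <= last:
        out.append(d)
        d = add_months(d, 1)
    return out
```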

Initial Load Sessions

- PLP_WorkforceEventFact_Month_Full (loads new records)

Incremental Load Sessions

- PLP_WorkforceEventQueue_AsgMonth (adds to the change queue table any assignments needing new snapshots since the last load)
- PLP_WorkforceEventFact_Month_Mntn (deletes records to be refreshed)
- PLP_WorkforceEventFact_Month (loads changed records)


8.1.8. Workforce Aggregate Fact (W_WRKFC_BAL_A)

[Figure: W_WRKFC_BAL_A load flow. It involves W_EMPLOYMENT_D and W_EMPLOYMENT_STAT_CAT_D (dimension aggregate load and parent-level update, FULL and INCR), W_WRKFC_EVT_MONTH_F, and the change queue tables W_WRKFC_EVT_MONTH_EQ_TMP and W_WRKFC_BAL_A_EQ_TMP (INCR only). The aggregate fact is loaded directly via the Employment Dimension, but it remains at the grain of the Employment Stat Cat aggregate dimension.]

The aggregate dimension W_EMPLOYMENT_STAT_CAT_D is based on the distinct Employment Status and Category values available in the W_EMPLOYMENT_D table.

The aggregate fact table W_WRKFC_BAL_A is based on the snapshot fact table W_WRKFC_EVT_MONTH_F and the aggregate dimension W_EMPLOYMENT_STAT_CAT_D. Its purpose is to improve performance over querying W_WRKFC_EVT_MONTH_F directly.

The aggregate fact W_WRKFC_BAL_A is loaded directly from the dimension W_EMPLOYMENT_D (while remaining at the grain of the aggregate dimension W_EMPLOYMENT_STAT_CAT_D) and from the workforce month snapshot fact W_WRKFC_EVT_MONTH_F.
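The relationship between W_EMPLOYMENT_D, the aggregate dimension and the balance aggregate can be sketched in Python. The column names (emp_status, emp_category, stat_cat_wid, employment_wid, headcount, snapshot_month) are hypothetical stand-ins. The surrogate keys are simply numbered, and the month fact rows passed in are assumed to be snapshot records only. The sketch illustrates that the aggregate is keyed through W_EMPLOYMENT_D but ends up at the grain of W_EMPLOYMENT_STAT_CAT_D.

```python
from collections import defaultdict
from datetime import date


def build_stat_cat_dim(employment_rows: list[dict]) -> dict[tuple[str, str], int]:
    """Distinct (status, category) pairs with surrogate keys, in the spirit of W_EMPLOYMENT_STAT_CAT_D."""
    pairs = sorted({(r["emp_status"], r["emp_category"]) for r in employment_rows})
    return {pair: wid for wid, pair in enumerate(pairs, start=1)}


def parent_level_update(employment_rows: list[dict], stat_cat_dim: dict[tuple[str, str], int]) -> None:
    """Stamp each W_EMPLOYMENT_D-style row (in place) with the key of its aggregate dimension row."""
    for r in employment_rows:
        r["stat_cat_wid"] = stat_cat_dim[(r["emp_status"], r["emp_category"])]


def balance_aggregate(month_rows: list[dict], employment_by_wid: dict[int, dict]) -> dict[tuple[int, date], int]:
    """Sum headcount per (stat_cat_wid, snapshot_month), reaching that grain via the employment row."""
    totals: dict[tuple[int, date], int] = defaultdict(int)
    for f in month_rows:
        stat_cat_wid = employment_by_wid[f["employment_wid"]]["stat_cat_wid"]
        totals[(stat_cat_wid, f["snapshot_month"])] += f["headcount"]
    return dict(totals)
```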


Initial Load Sessions

- PLP_EmploymentDimensionAggregate_Load_Full (loads the aggregate dimension W_EMPLOYMENT_STAT_CAT_D based on the distinct Employment Status and Category values available in the W_EMPLOYMENT_D table)
- PLP_EmploymentDimension_ParentLevelUpdate_Full (the aggregate dimension W_EMPLOYMENT_STAT_CAT_D updates the parent dimension W_EMPLOYMENT_D table)
- PLP_WorkforceBalanceAggregateFact_Load_Full (loads new records into the balance aggregate fact table based on W_WRKFC_EVT_MONTH_F and the aggregate dimension W_EMPLOYMENT_STAT_CAT_D. Although it is loaded directly from W_EMPLOYMENT_D, the balance aggregate fact remains at the grain of the aggregate dimension W_EMPLOYMENT_STAT_CAT_D)

Incremental Load Sessions

- PLP_EmploymentDimensionAggregate_Load (loads new rows from the current ETL run into the aggregate dimension W_EMPLOYMENT_STAT_CAT_D, based on the distinct Employment Status and Category values available in the W_EMPLOYMENT_D table)
- PLP_EmploymentDimension_ParentLevelUpdate (the aggregate dimension W_EMPLOYMENT_STAT_CAT_D updates the parent dimension W_EMPLOYMENT_D table)
- PLP_WorkforceBalanceAggregateFact_Load (deletes the records that came in the event queue table W_WRKFC_BAL_A_EQ_TMP and loads new records into the balance aggregate fact table)
- PLP_WorkforceBalanceQueueAggregate_PostLoad (loads the event queue table W_WRKFC_BAL_A_EQ_TMP with records based on W_WRKFC_EVT_MONTH_EQ_TMP and W_WRKFC_EVT_MONTH_F)


8.1.9. Workforce Aggregate Event Fact (W_WRKFC_EVT_A)

[Figure: W_WRKFC_EVT_A load flow. It involves W_EMPLOYMENT_D with W_EMPLOYMENT_STAT_CAT_D, and W_WRKFC_EVENT_TYPE_D with W_WRKFC_EVENT_GROUP_D (dimension aggregate loads and parent-level updates, FULL and INCR). It also involves W_WRKFC_EVT_MERGE_F and the change queue tables W_WRKFC_EVT_EQ_TMP and W_WRKFC_EVT_A_EQ_TMP (INCR only). The aggregate fact is loaded directly via the Employment and Workforce Event Type dimensions, but it is at the grain of the Employment Stat Cat and Workforce Event Group aggregate dimensions.]

The aggregate dimension W_EMPLOYMENT_STAT_CAT_D is based on the distinct Employment Status and Category values available in the W_EMPLOYMENT_D table.

The aggregate dimension W_WRKFC_EVENT_GROUP_D is based on the Event Group and Event Sub Group in the W_WRKFC_EVENT_TYPE_D dimension table.

W_WRKFC_EVT_A is an aggregate fact table based on the merged event fact table W_WRKFC_EVT_MERGE_F and the aggregate dimensions W_WRKFC_EVENT_GROUP_D and W_EMPLOYMENT_STAT_CAT_D. Its purpose is to improve performance over querying W_WRKFC_EVT_MERGE_F directly.

The aggregate fact W_WRKFC_EVT_A is loaded directly from the following tables:

- the dimension W_EMPLOYMENT_D (while remaining at the grain of the aggregate dimension W_EMPLOYMENT_STAT_CAT_D)
- the dimension W_WRKFC_EVENT_TYPE_D (while remaining at the grain of the aggregate dimension W_WRKFC_EVENT_GROUP_D)
- the workforce fact W_WRKFC_EVT_MERGE_F
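The incremental refresh of W_WRKFC_EVT_A (delete the queued keys, then re-aggregate them) can be sketched like this. The keys are hypothetical (event_group_wid, stat_cat_wid) pairs, the queue stands in for W_WRKFC_EVT_A_EQ_TMP, and the aggregated measure is simply an event count.

```python
from collections import Counter


def refresh_event_aggregate(aggregate: dict, merge_fact: list[dict], queue: set) -> dict:
    """Rebuild only the aggregate rows whose (event_group_wid, stat_cat_wid) key is queued."""
    # Delete the queued keys (the rows listed in the event queue table) ...
    refreshed = {k: v for k, v in aggregate.items() if k not in queue}
    # ... then re-aggregate just those keys from the merge fact.
    counts = Counter(
        (r["event_group_wid"], r["stat_cat_wid"])
        for r in merge_fact
        if (r["event_group_wid"], r["stat_cat_wid"]) in queue
    )
    refreshed.update(counts)
    return refreshed
```

A queued key with no remaining events in the merge fact simply disappears from the aggregate. Keys not in the queue are left untouched.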


Initial Load Sessions

- PLP_EmploymentDimensionAggregate_Load_Full (loads the aggregate dimension W_EMPLOYMENT_STAT_CAT_D based on the distinct Employment Status and Category values available in the W_EMPLOYMENT_D table)
- PLP_EmploymentDimension_ParentLevelUpdate_Full (the aggregate dimension W_EMPLOYMENT_STAT_CAT_D updates the parent dimension W_EMPLOYMENT_D table)
- PLP_WorkforceEventGroupDimensionAggregate_Load_Full (loads the aggregate dimension W_WRKFC_EVENT_GROUP_D based on the Event Group and Event Sub Group in the W_WRKFC_EVENT_TYPE_D dimension table)
- PLP_WorkforceEventGroupDimension_ParentLevelUpdate (the aggregate dimension W_WRKFC_EVENT_GROUP_D updates EVENT_GROUP_WID of the parent level dimension W_WRKFC_EVENT_TYPE_D)
- PLP_WorkforceEventAggregateFact_Full (loads new records into the event aggregate fact table W_WRKFC_EVT_A based on the workforce fact table W_WRKFC_EVT_MERGE_F and the aggregate dimensions W_EMPLOYMENT_STAT_CAT_D and W_WRKFC_EVENT_GROUP_D. Although it is loaded directly from W_EMPLOYMENT_D and W_WRKFC_EVENT_TYPE_D, the event aggregate fact remains at the grain of the aggregate dimensions W_EMPLOYMENT_STAT_CAT_D and W_WRKFC_EVENT_GROUP_D)

Incremental Load Sessions

- PLP_EmploymentDimensionAggregate_Load (loads new rows from the current ETL run into the aggregate dimension W_EMPLOYMENT_STAT_CAT_D, based on the distinct Employment Status and Category values available in the W_EMPLOYMENT_D table)
- PLP_EmploymentDimension_ParentLevelUpdate (the aggregate dimension W_EMPLOYMENT_STAT_CAT_D updates the parent dimension W_EMPLOYMENT_D table)
- PLP_WorkforceEventGroupDimensionAggregate_Load (loads new rows from the current ETL run into the aggregate dimension W_WRKFC_EVENT_GROUP_D, based on the Event Group and Event Sub Group in the W_WRKFC_EVENT_TYPE_D dimension table)
- PLP_WorkforceEventGroupDimension_ParentLevelUpdate (the aggregate dimension W_WRKFC_EVENT_GROUP_D updates the parent level dimension W_WRKFC_EVENT_TYPE_D)
- PLP_WorkforceEventAggregateFact (deletes the records that came in the event queue table W_WRKFC_EVT_A_EQ_TMP and loads new records into the workforce event aggregate fact table)
- PLP_WorkforceEventQueueAggregate_PostLoad (loads the event queue table W_WRKFC_EVT_A_EQ_TMP with records based on W_WRKFC_EVT_EQ_TMP and W_WRKFC_EVT_F)


8.1.10. Handling Deletes

[Figure: Delete processing. The source OLTP supplies integration keys or assignments to W_WRKFC_EVT_F_PE. The records to be deleted are then written to W_WRKFC_EVT_DEL_F: any fact record whose integration key or assignment key is not in the primary extract table. Finally, the delete flag is set in W_WRKFC_EVT_F.]

All the standard OBIA mappings are provided for processing deletes (Primary Extract, Identify Deletes and Soft Delete). However, because of the added complexity of maintaining the date-track (a continuous set of effective start/end dates per assignment), the functionality differs slightly.

There are two types of delete to distinguish between:

- Date-tracked delete: a single record is deleted for an assignment, but the others remain.
- Purge: all records for an assignment are deleted, because the assignment no longer exists on the source transaction system.

These are discussed in more detail below.


8.1.11. Propagating to derived facts

The incremental load for derived facts will automatically detect any records deleted via the delete process (W_WRKFC_EVT_F_DEL). Deleted records will be physically removed from the derived fact tables as part of the incremental refresh.

8.1.12. Date-tracked Deletes

To delete individual records using the standard delete mappings, extract the primary keys of the fact into the primary extract table. The identify delete mapping then compares the primary extract table with the fact table. Next, the soft delete mapping flags as deleted any fact record that is not in the primary extract table.

Universal Adaptor

The recommendation is to use the same method as for Oracle EBS and PeopleSoft: always stage the changed fact records and any subsequent records, and allow the incremental fact load to take care of the deletes.

Alternatively, the behavior can be altered, but the burden of maintaining the date-track correctly then falls to the customer. To alter the behavior so that deletes are done only by the standard delete process, disable the mapping PLP_WorkforceEventFact_Mntn. However, see the worked example below for the kind of date-track maintenance required to keep the fact error free.

8.1.13. Purges

To purge all records for an assignment using the standard delete mappings, extract the distinct assignment ids into the primary extract table. The identify delete mapping then compares the primary extract table with the fact table. Next, the soft delete mapping flags as deleted all records for assignments in the fact that are not in the primary extract table.


8.1.14. Primary Extract

[Figure: The source OLTP loads W_WRKFC_EVT_F_PE with either DATASOURCE_NUM_ID and INTEGRATION_ID (holding the ASSIGNMENT_ID), or DATASOURCE_NUM_ID and INTEGRATION_ID (the fact integration key).]

Extract from the source OLTP either the valid assignments or the valid integration keys for the fact. The delete process deletes fact records with no valid assignment (purge) or with no valid integration key (individual record delete). This step can be skipped if there is an alternative method (e.g. a source trigger) of detecting the purges or deletes and pushing the fact keys to delete directly to the W_WRKFC_EVT_F_DEL table.

The recommendation is to use purges only, i.e. to extract only the distinct valid assignment ids. If the other option is used, take care to leave the fact consistent; see the worked example below.

8.1.15. Identify Delete

[Figure: W_WRKFC_EVT_F_PE is compared with W_WRKFC_EVT_F to produce W_WRKFC_EVT_DEL_F, the records to be deleted: any fact record whose integration key or assignment key is not in the primary extract table.]


Compares the primary extract table with the fact table to detect purges or deletes. The primary keys of fact records to be deleted are inserted into the delete table.

This step can be skipped if there is an alternative method (e.g. a source trigger) of detecting the purges or deletes and pushing the fact keys to delete directly to the W_WRKFC_EVT_F_DEL table.
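The comparison performed by the identify delete step can be sketched as shown below for both primary extract options. This is a hypothetical illustration in Python. The field names and the integration key format are assumptions. The real mapping works against W_WRKFC_EVT_F_PE and W_WRKFC_EVT_F in the database.

```python
# Fact column compared with the primary extract in each mode:
# "purge" extracts valid assignment ids, "record" extracts valid integration keys.
KEY_FIELD = {"purge": "assignment_id", "record": "integration_id"}


def identify_deletes(fact: list[dict], pe_keys: set[tuple], mode: str) -> list[tuple]:
    """Return (datasource_num_id, integration_id) of fact rows missing from the primary extract."""
    field = KEY_FIELD[mode]  # raises KeyError for an unknown mode
    return [
        (r["datasource_num_id"], r["integration_id"])
        for r in fact
        if (r["datasource_num_id"], r[field]) not in pe_keys
    ]
```

In purge mode, every row of an assignment missing from the extract is returned. In record mode, only the individual missing rows are returned, and this is the case that can break the date-track.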

Incremental Load Sessions

- SIL_WorkforceEventFact_IdentifyDelete

8.1.16. Soft Delete

[Figure: W_WRKFC_EVT_DEL_F drives setting the delete flag in W_WRKFC_EVT_F.]

This updates the delete flag to Y (Yes) for fact records in the delete table.

Incremental Load Sessions

- SIL_WorkforceEventFact_SoftDelete


8.1.17. Date-Tracked Deletes - Worked Example

The recommended way of handling date-tracked deletes in the workforce fact is to always stage the changed records (in the case of a delete, the previous record) and let the fact incremental load mappings handle the changes. The following example shows what can happen if the fact is not maintained correctly when records are deleted.

W_WRKFC_EVT_F

Suppose that after the initial load, the following data was loaded in the fact table for assignment 1:

Assignment | Start Date | End Date | Change Type | Organization | Salary
--- | --- | --- | --- | --- | ---
1 | 01-Jan-2000 | 31-Dec-2000 | HIRE | | 5000
1 | 01-Jan-2001 | 31-Dec-2001 | REVIEW | | 6000
1 | 01-Jan-2002 | 31-Dec-2002 | TRANSFER | B | 6000
1 | 01-Jan-2003 | 01-Jan-3714 | REVIEW | B | 7000

Now suppose the transfer record was deleted on the source transaction system. If this were handled by the primary extract → identify delete → soft delete mappings, the following records would be left in the fact table (delete flag = N):

Assignment | Start Date | End Date | Change Type | Organization | Salary
--- | --- | --- | --- | --- | ---
1 | 01-Jan-2000 | 31-Dec-2000 | HIRE | | 5000
1 | 01-Jan-2001 | 31-Dec-2001 | REVIEW | | 6000
1 | 01-Jan-2003 | 01-Jan-3714 | REVIEW | B | 7000

This is wrong on two counts:

1. The date-track is not continuous, so downstream ETL may fail or lose data. Reports in Answers may also not return data for the gaps in the date-track.

2. The data is not consistent: since the transfer has been deleted, the REVIEW on 01-Jan-2003 should no longer show organization B.

The first issue would be reasonably simple to fix with an update. This could be done either by pushing the updated record into the fact staging table or, if updating the fact directly, by tracking the event (effective start) date of the updated row in W_WRKFC_EVT_EQ_TMP.

However, the second issue is more complex. Allowing the fact incremental load to take care of the deletes avoids both issues.
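A simple check for the first problem can be run per assignment, both before and after processing deletes. The sketch below is a hypothetical helper that assumes each row is an inclusive (start, end) date pair. It flags gaps and overlaps, and it reproduces the gap left by the deleted transfer in the example above.

```python
from datetime import date, timedelta


def date_track_issues(rows: list[tuple[date, date]]) -> list[tuple[str, date, date]]:
    """Check one assignment's (start, end) rows for gaps and overlaps in the date-track."""
    issues = []
    ordered = sorted(rows)
    for (_, end), (next_start, _) in zip(ordered, ordered[1:]):
        expected = end + timedelta(days=1)  # the next row should start the day after
        if next_start > expected:
            issues.append(("gap", expected, next_start - timedelta(days=1)))
        elif next_start < expected:
            issues.append(("overlap", next_start, end))
    return issues
```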


8.2. Recruitment Fact Process

8.2.1. ETL Flow

[Figure: Recruitment ETL flow. It runs from the Job Requisition Event and Applicant Event CSV file inputs, through the staging tables W_JOB_RQSTN_EVENT_FS and W_APPL_EVENT_FS, the event facts W_JOB_RQSTN_EVENT_F and W_APPL_EVENT_F, and the accumulated snapshot facts W_JOB_RQSTN_ACC_SNP_F and W_APPL_ACC_SNP_F, to the Recruitment Pipeline Fact W_RCRTMNT_EVENT_F. From there it feeds the aggregates W_RCRTMNT_RQSTN_A, W_RCRTMNT_APPL_A and W_RCRTMNT_HIRE_A.]

Terminology

Assignment refers to an instance of a person in a job. The assignment key should not be updatable on the source transaction system.

Applicant refers to the person applying for the posted vacancy. He/she can be an existing employee, an ex-employee of the organization or an external candidate.

Hiring Manager refers to the person to whom the incumbent would report, once hired.


Key Steps and Table Descriptions

Table | Primary Sources | Grain | Description
--- | --- | --- | ---
W_JOB_RQSTN_EVENT_F | W_JOB_RQSTN_EVENT_FS (flat file or source adaptors) | One row per job requisition per job requisition event per event date | Records job requisition events for all job requisitions, from open through to close/fulfillment.
W_APPL_EVENT_F | W_APPL_EVENT_FS (flat file or source adaptors) | One row per application per job requisition event per event date and sequence | Records application events for all applications, from applying, screening and selection through offer extension, hire or termination of the application.
W_JOB_RQSTN_ACC_SNP_F | W_JOB_RQSTN_EVENT_F | One row per job requisition | Records job requisition related event dates, de-normalized.
W_APPL_ACC_SNP_F | W_APPL_EVENT_F | One row per application | Records application related event dates, de-normalized.
W_RCRTMNT_EVENT_F | W_JOB_RQSTN_EVENT_F, W_APPL_EVENT_F, W_JOB_RQSTN_ACC_SNP_F, W_APPL_ACC_SNP_F | One row per recruitment event type per event date and sequence | Merges the job requisition events and application events, along with the de-normalized event dates. Also known as the Recruitment Pipeline fact.
W_RCRTMNT_RQSTN_A | W_RCRTMNT_EVENT_F, W_MONTH_D | One row per job requisition per recruitment event month | Aggregates the job requisition related metrics at a monthly grain.
W_RCRTMNT_APPL_A | W_RCRTMNT_EVENT_F, W_MONTH_D | One row per combination of applicant demographics per recruitment event month | Aggregates the applicant related metrics at a monthly grain.
W_RCRTMNT_HIRE_A | W_RCRTMNT_EVENT_F, W_MONTH_D | One row per combination of hired applicant demographics per recruitment event month | Aggregates the applicant related metrics for hired applicants only, at a monthly grain.

Key Setup/Configuration Steps

All the setup and configuration steps required for core Workforce also apply to Recruitment (see the same section for Workforce). For Universal Adaptors, no additional configuration steps are required, as long as the domain values are configured accurately.


8.2.2. Job Requisition Event & Application Event Facts (W_JOB_RQSTN_EVENT_F & W_APPL_EVENT_F)

These two tables are loaded from flat files via the corresponding Universal staging tables (W_JOB_RQSTN_EVENT_FS and W_APPL_EVENT_FS). The associated Excel spreadsheet (HR_Analytics_UA_Lineage.xlsx) lists the columns to be populated, whether each is mandatory, and all other related information.

[Figure: FULL and INCR load processes for job requisitions and applicants. Job requisitions flow from W_JOB_RQSTN_EVENT_FS to W_JOB_RQSTN_EVENT_F to W_JOB_RQSTN_ACC_SNP_F, with job requisition age band events (FULL and INCR). Applicants flow from W_APPL_EVENT_FS to W_APPL_EVENT_F to W_APPL_ACC_SNP_F, with applicant generated events (FULL and INCR) and applicant POW events (INCR).]


Initial Load Sessions

- SIL_JobRequisitionEventFact_Full (loads new records)
- SIL_ApplicantEventFact_Full (loads new records)
- PLP_JobRequisition_AgeBandEvents_Full (deletes and creates requisition age band change event records for job requisitions due to enter a new requisition age band, based on their current age since opening, and applies the new event to the job requisition accumulated snapshot fact)
- PLP_ApplicantEventFact_GeneratedEvents_Full (deletes and generates pseudo applicant events that are not supplied by the source system but are necessary for a complete analysis of the recruitment pipeline process)

Incremental Load Sessions

- SIL_JobRequisitionEventFact (updates changed records and loads new records)
- PLP_JobRequisition_AgeBandEvents (deletes and creates requisition age band change event records for job requisitions due to enter a new requisition age band, based on their current age since opening, and applies the new event to the job requisition accumulated snapshot fact)
- SIL_ApplicantEventFact (updates changed records and loads new records)
- PLP_ApplicantEventFact_GeneratedEvents_Full (deletes and generates pseudo applicant events that are not supplied by the source system but are necessary for a complete analysis of the recruitment pipeline process)
- PLP_ApplicantEventFact_PeriodOfWorkEvents (generates pseudo period-of-work-band-crossing events in case a hired applicant crosses his/her first period of work band. The source does not provide these events, so they are generated for all applicants, on the assumption that every applicant will be hired and stay for the first period-of-work-band timeframe)

8.2.3. Job Requisition Accumulated Snapshot Fact (W_JOB_RQSTN_ACC_SNP_F)

This table stores the de-normalized dates of the various job requisition events held in the Job Requisition Events base fact table. After the pseudo Age Band Change events are populated in the base Job Requisition fact table, those dates are also reflected in the accumulated snapshot fact table. Any changes to the Hiring Manager Position Hierarchy are also applied to this accumulated snapshot fact. Note that updates resulting from hierarchy changes are not applied during a full ETL run.
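To make "de-normalized dates" concrete, the following Python sketch pivots event rows (one row per event, as in the base event fact) into one snapshot row per requisition holding one date per event type. The event type codes and the rule of keeping the latest date when an event type repeats are assumptions for illustration, not the documented behavior of W_JOB_RQSTN_ACC_SNP_F.

```python
from collections import defaultdict
from datetime import date


def build_accumulated_snapshot(events):
    """
    events: iterable of (requisition id, event type, event date) rows,
    standing in for W_JOB_RQSTN_EVENT_F.
    Returns requisition id -> {event type: date}: one de-normalized row per
    requisition with one date "column" per event type. When an event type
    occurs more than once, the latest date is kept (an assumption).
    """
    snapshot = defaultdict(dict)
    for req_id, event_type, event_date in events:
        current = snapshot[req_id].get(event_type)
        if current is None or event_date > current:
            snapshot[req_id][event_type] = event_date
    return dict(snapshot)
```

An age band change event recorded in the base fact then simply becomes one more date on the requisition's snapshot row.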


[Figure: Job Requisition Accumulated Snapshot Fact (W_JOB_RQSTN_ACC_SNP_F) load flow. Processes: Job Req. Load (FULL & INCR), Job Req. Age Band Events (FULL & INCR), Position Hierarchy Update Process (INCR ONLY). Tables: Job Requisition Event Fact (W_JOB_RQSTN_EVENT_F), Age Band Dimension (W_AGE_BAND_D), Position Hierarchy Pre Change Temporary (W_POSITION_DH_PRE_CHG_TMP), Position Hierarchy Post Change Temporary (W_POSITION_DH_POST_CHG_TMP).]

Initial Load Sessions

PLP_JobRequisition_AccumulatedSnapshot_Full (loads new records)

PLP_JobRequisition_AgeBandEvents_Full (after the age-band-change events are recorded in

the base Job Requisition fact table, this mapping updates the related date column in the

accumulated snapshot fact table)

Incremental Load Sessions

PLP_JobRequisition_AccumulatedSnapshot (updates changed records and loads new records)

PLP_JobRequisition_AgeBandEvents (after the age-band-change events are recorded in the

base Job Requisition fact table, this mapping updates the related date column in the

accumulated snapshot fact table)

PLP_JobRequisition_AccumulatedSnapshot_PositionHierarchy_Update (regular or back-dated changes to the Hiring Manager Position Hierarchy are applied to the accumulated snapshot fact table)
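The hierarchy update applied by PLP_JobRequisition_AccumulatedSnapshot_PositionHierarchy_Update depends on knowing which positions changed. A hedged Python sketch of that comparison follows; it assumes each hierarchy temporary table can be reduced to a map from a position to its chain of ancestor positions, which is a hypothetical simplification, and the function name is illustrative only.

```python
def changed_hierarchy_rows(pre_change, post_change, snapshot_rows):
    """
    pre_change / post_change: position id -> tuple of ancestor position ids,
    hypothetical stand-ins for W_POSITION_DH_PRE_CHG_TMP and
    W_POSITION_DH_POST_CHG_TMP.
    snapshot_rows: requisition id -> hiring manager position id.
    Returns requisition id -> new ancestor tuple for every snapshot row whose
    hiring manager position has a different hierarchy after the change.
    """
    # Positions whose ancestor chain differs (or that are new) after the change.
    changed = {
        pos for pos, path in post_change.items()
        if pre_change.get(pos) != path
    }
    return {
        req_id: post_change[pos]
        for req_id, pos in snapshot_rows.items()
        if pos in changed
    }
```

Only the snapshot rows returned here would need updating, which is why the comparison is useful in incremental runs and has nothing to compare against in a full load.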

8.2.4. Applicant Accumulated Snapshot Fact (W_APPL_ACC_SNP_F)

This table stores the de-normalized dates of the various applicant events held in the Applicant Events base fact table. After the pseudo Age Band Change, Period of Work Band Change and other missing recruitment pipeline events are populated in the base Applicant Event fact table, those dates are also reflected in the accumulated snapshot fact table.

[Figure: Applicant Accumulated Snapshot Fact (W_APPL_ACC_SNP_F) load flow. Processes: Applicant Load (FULL & INCR), Period of Work Band Events (FULL & INCR). Tables: Applicant Event Fact (W_APPL_EVENT_F), Period of Work Band Dimension (W_PRD_OF_WRK_BAND_D).]

Initial Load Sessions

PLP_Applicant_AccumulatedSnapshot_Full (loads new records)

Incremental Load Sessions

PLP_Applicant_AccumulatedSnapshot (updates changed records and loads new records)
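The phrase "updates changed records and loads new records" describes an upsert. A minimal Python sketch of that pattern for the applicant snapshot follows, with dictionaries standing in for W_APPL_ACC_SNP_F (an assumption; the real session works on database tables) and a hypothetical function name.

```python
def upsert_snapshot(target, incoming):
    """
    Merge incoming snapshot rows into target; both are dicts keyed by
    applicant id. New keys are inserted; existing keys are updated only
    when the incoming row differs. target is modified in place.
    Returns (inserted keys, updated keys).
    """
    inserted, updated = [], []
    for key, row in incoming.items():
        if key not in target:
            target[key] = row
            inserted.append(key)
        elif target[key] != row:
            target[key] = row
            updated.append(key)
        # Unchanged rows are left alone, so they cost no write.
    return inserted, updated
```

Skipping unchanged rows is the usual reason for comparing before updating: the incremental run only writes what actually moved.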

8.2.5. Recruitment Pipeline Event Fact (W_RCRTMNT_EVENT_F)

This is the main Recruitment Pipeline event fact table used for reporting, and it is also used to build aggregate tables at three different grains. Its main purpose is to merge both sides of the recruitment events (job requisition events as well as applicant events) and, on top of that, to provide some value-added metrics.

First, before any data is loaded, an image of this table is captured into an Event Queue table (W_RCRTMNT_EVENT_F_EQ_TMP), whose purpose is to track all changes that are about to happen in the current run. Because this pre-image is captured by comparison against the main pipeline fact table, the pre-imaging process does not apply during a full ETL run. Note that pre-imaging occurs on both sides (job requisition events as well as applicant events), and in addition to the event queue table, these processes also populate another temporary table (W_RCRTMNT_EVENT_F_TMP), which is used later when building the aggregates.

Next, the data from each side is brought into the pipeline fact. During incremental runs, the event queue table drives this merge process for better performance. Once the data is loaded, a post-image process captures the image of the loaded pipeline fact table and writes it to the temporary table W_RCRTMNT_EVENT_F_TMP. This temporary table then becomes the driver table for building the three aggregates.
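The pre-imaging step can be sketched in Python as follows. Dictionaries keyed by (requisition id, applicant id) stand in for W_RCRTMNT_EVENT_F, and the function and argument names are hypothetical. The sketch also shows why pre-imaging has no effect in a full load: an empty pipeline fact yields an empty event queue.

```python
def take_pre_image(pipeline, changed_reqs, changed_appls):
    """
    pipeline: dict of (requisition id, applicant id) -> row, standing in for
    the existing contents of W_RCRTMNT_EVENT_F.
    changed_reqs / changed_appls: ids arriving in this run from the job
    requisition side and from the applicant side.
    Returns (event_queue, pre_image): the pipeline keys about to change (the
    role of W_RCRTMNT_EVENT_F_EQ_TMP) and copies of their current rows (the
    pre-change image kept for aggregate building).
    """
    # A pipeline row is about to change if either side of it changed.
    event_queue = {
        key for key in pipeline
        if key[0] in changed_reqs or key[1] in changed_appls
    }
    # Copy the rows so later changes to the pipeline do not alter the image.
    pre_image = {key: dict(pipeline[key]) for key in event_queue}
    return event_queue, pre_image
```

Checking both key components mirrors the two pre-image sessions, one driven by requisition changes and one by applicant changes.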

[Figure: Process flow for INITIAL Load of the Recruitment Pipeline fact. Processes: Full load process (Job Req.), Full load process (Applicant). Tables: Job Requisition Event Fact (W_JOB_RQSTN_EVENT_F), Job Requisition Accumulated Snapshot Fact (W_JOB_RQSTN_ACC_SNP_F), Applicant Event Fact (W_APPL_EVENT_F), Applicant Accumulated Snapshot Fact (W_APPL_ACC_SNP_F), Recruitment Pipeline Fact (W_RCRTMNT_EVENT_F).]


[Figure: Process flow for INCREMENTAL Load of the Recruitment Pipeline fact. Processes: Pre Image Process (Job Req.), Pre Image Process (Applicant), Incremental load process (Job Req.), Incremental load process (Applicant), Post Image Process. Tables: Job Requisition Event Fact (W_JOB_RQSTN_EVENT_F), Applicant Event Fact (W_APPL_EVENT_F), Job Requisition Accumulated Snapshot Fact (W_JOB_RQSTN_ACC_SNP_F), Applicant Accumulated Snapshot Fact (W_APPL_ACC_SNP_F), Recruitment Pipeline Event Queue (W_RCRTMNT_EVENT_F_EQ_TMP), Recruitment Pipeline Temporary (W_RCRTMNT_EVENT_F_TMP), Recruitment Pipeline Fact (W_RCRTMNT_EVENT_F).]


Initial Load Sessions

PLP_RecruitmentEventFact_Applicants_Full (loads new records)

PLP_RecruitmentEventFact_JobRequisitions_Full (loads new records)

Incremental Load Sessions

PLP_RecruitmentEventFact_Applicants_PreImage (takes a pre-image of the pipeline fact before

the new applicant events are loaded; in other words, determines what is about to change)

PLP_RecruitmentEventFact_JobRequisitions_PreImage (takes a pre-image of the pipeline fact

before the job requisition events are loaded; in other words, determines what is about to

change)

PLP_RecruitmentEventFact_Applicants (deletes old records that appear in the event queue and

re-processes and re-inserts them; new records are inserted)

PLP_RecruitmentEventFact_JobRequisitions (deletes old records that appear in the event queue

and re-processes and re-inserts them; new records are inserted)

PLP_RecruitmentEventFact_PostImage (takes a post-image of the pipeline fact after all the

changes are done to it)
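The incremental sessions above follow a delete-and-reinsert pattern driven by the event queue, followed by a post-image. A minimal Python sketch of that sequence follows; dictionaries keyed by (requisition id, applicant id) stand in for the tables, and the function name is hypothetical.

```python
def apply_incremental(pipeline, event_queue, reprocessed_rows):
    """
    pipeline: dict of (requisition id, applicant id) -> row; modified in place.
    event_queue: keys captured by the pre-image step.
    reprocessed_rows: freshly built rows for this run, covering both the
    re-processed queued keys and brand-new keys.
    Returns the post-image: the rows now in the pipeline for every key
    touched in this run.
    """
    # Delete the old versions of the queued records ...
    for key in event_queue:
        pipeline.pop(key, None)
    # ... then re-insert the re-processed records and insert the new ones.
    pipeline.update(reprocessed_rows)
    # Post-image: current state of everything this run touched.
    touched = event_queue | set(reprocessed_rows)
    return {key: pipeline[key] for key in touched if key in pipeline}
```

In this sketch a queued record for which no fresh row is produced stays deleted and is absent from the post-image; whether the product behaves that way is not stated in this document.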

8.2.6. Recruitment Job Requisition Aggregate Fact (W_RCRTMNT_RQSTN_A)

This table stores aggregated measures applicable to Job Requisitions at a monthly level. Its load is driven by W_RCRTMNT_EVENT_F_TMP, the Pipeline fact temporary table that was populated while the Pipeline fact was being loaded.

During a full load, the metrics are aggregated into a temporary table, W_RCRTMNT_RQSTN_A_TMP2, which is then updated to set the effective-to date column of the final aggregate table and is finally loaded into the final aggregate table.
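The full-load sequence for W_RCRTMNT_RQSTN_A can be pictured with the Python sketch below: aggregate into a monthly intermediate result (the role of W_RCRTMNT_RQSTN_A_TMP2), then derive effective-to dates. Summing a single measure, the rule that a row's effective-to date is the day before the requisition's next monthly row, and the 9999-12-31 open-ended date are all assumptions for illustration; the function name is hypothetical.

```python
from collections import defaultdict
from datetime import date, timedelta

HIGH_DATE = date(9999, 12, 31)  # hypothetical "open-ended" effective-to date


def monthly_aggregate(events):
    """
    events: iterable of (requisition id, event date, measure) rows.
    Step 1: sum the measure per requisition per month.
    Step 2: set each row's effective-to date to the day before the next
    monthly row of the same requisition; the latest row stays open-ended.
    Returns a sorted list of (requisition id, effective from, effective to, total).
    """
    totals = defaultdict(int)
    for req_id, event_date, measure in events:
        totals[(req_id, event_date.replace(day=1))] += measure

    by_req = defaultdict(list)
    for (req_id, month_start), total in totals.items():
        by_req[req_id].append((month_start, total))

    result = []
    for req_id in sorted(by_req):
        months = sorted(by_req[req_id])
        for i, (month_start, total) in enumerate(months):
            if i + 1 < len(months):
                eff_to = months[i + 1][0] - timedelta(days=1)
            else:
                eff_to = HIGH_DATE
            result.append((req_id, month_start, eff_to, total))
    return result
```

Deriving effective-to dates only after all months are aggregated matches the order described above: aggregate first, then update the intermediate table, then load.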

During an incremental load, an additional process, driven by the pre-populated temporary table W_RCRTMNT_EVENT_F_TMP (which tracks the changes made to the Pipeline fact in the current ETL run), loads yet another temporary table, W_RCRTMNT_RQSTN_A_TMP1. The subsequent aggregation of metrics into the second temporary table, W_RCRTMNT_RQSTN_A_TMP2, is similar to that of the full load, and so are the remaining steps (updating the effective-to dates and loading the final aggregate table).
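The incremental variant differs mainly in restricting the work to the requisitions touched in the current run. A hedged Python sketch follows; it reuses a simplified monthly sum (an assumption), uses hypothetical function names, and leaves out the effective-to-date step for brevity.

```python
from collections import defaultdict
from datetime import date


def monthly_totals(events):
    """Sum the measure per (requisition id, first day of the event's month)."""
    totals = defaultdict(int)
    for req_id, event_date, measure in events:
        totals[(req_id, event_date.replace(day=1))] += measure
    return dict(totals)


def incremental_refresh(aggregate, pipeline_events, changed_rows):
    """
    aggregate: (requisition id, month start) -> total; modified in place.
    pipeline_events: all (requisition id, event date, measure) rows now in
    the pipeline fact.
    changed_rows: rows from the change-tracking temporary table for this run;
    only their first element (requisition id) is used.
    Returns the set of requisitions that were re-aggregated.
    """
    # Plays the role of W_RCRTMNT_RQSTN_A_TMP1: requisitions touched this run.
    affected = {row[0] for row in changed_rows}
    # Drop the existing aggregate rows of the affected requisitions ...
    for key in [k for k in aggregate if k[0] in affected]:
        del aggregate[key]
    # ... and rebuild them from the pipeline; other requisitions are untouched.
    aggregate.update(
        monthly_totals(e for e in pipeline_events if e[0] in affected)
    )
    return affected
```

Limiting the rebuild to the affected requisitions is what makes the extra temporary table worthwhile: untouched requisitions keep their existing aggregate rows.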